Dewey, Expert Systems, and Education

Brief ponderings on John Dewey, Artificial Intelligence, and education.

Note: This post should be read as a spark of interest rather than a systematic exploration of the themes presented here, as I am fully aware this topic deserves a book-length treatment. Take this as loosely structured thoughts on A.I. and the education system.

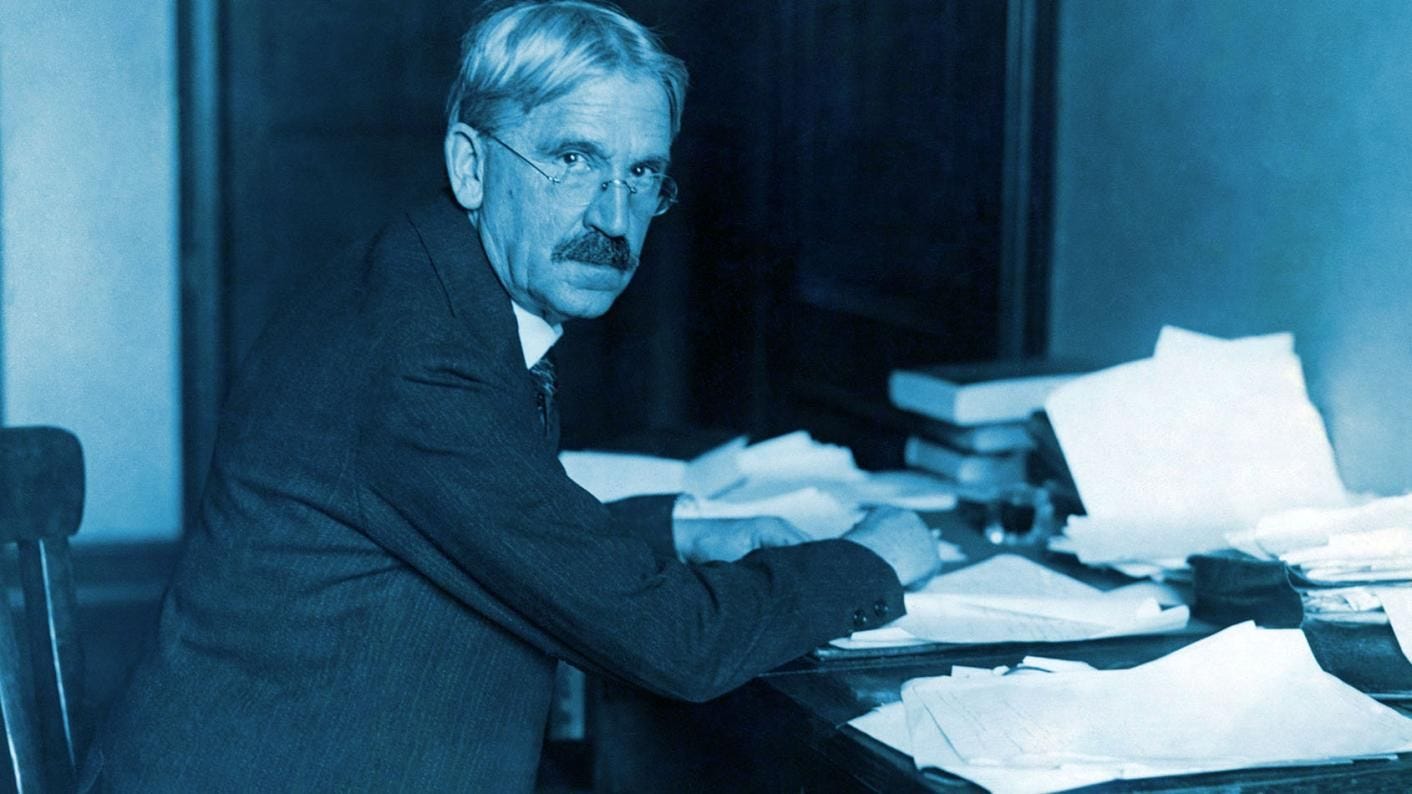

Who was John Dewey:

For the few who are unaware of “who” John Dewey was: Dewey was an American philosopher, best known for his philosophical contributions to education. In other circles he was known as a (functionalist) psychologist, and he was one of the core members of the philosophical movement/tradition known as American Pragmatism (Peirce, James, and Dewey). American Pragmatism, as a movement and/or method of doing philosophy, had an interesting ebb and flow of popularity and relevance, particularly with the rise of the (then) new methodology of doing philosophy, Analytic Philosophy. Dewey’s wider influence and contributions, implicit and explicit, often get overshadowed by his pivoting of ‘how’ one perceives and in turn does education.

Before the turn to student-centred education, traditional education emphasized rote memorization, drills, and teacher-centred instruction, with the teacher literally and metaphorically having the final say in all questions about the child’s education and its method. One can get a general gist of that educational world from one’s personal experience of the “traditional” classroom, with its heavy emphasis on standardized testing and data-driven education. That emphasis, it should be mentioned, is still ever present in today’s education field, with “teaching to the test” being a slogan for some educators.

A general way to see Dewey’s influence on modern conceptions of education is the notion of the “child-centric” or “student-centric” form of teaching, which is more or less what “discovery” education is. “Discovery education” is the idea of having more experiential learning, rather than the ‘rote’ education of memorizing facts and discrete pieces of knowledge that may or may not have any direct relation to the student’s own ‘life-world’ (Husserl). Student-centred teaching is mainly practised through project-based learning, presentations, etc. The metaphor of taking a different path and ending up at the same destination (education outcomes) can be partly attributed to Dewey’s philosophy of education.

Some considerations of advancements in ‘expert systems’ (Artificial Intelligence):

The message will get lost if I do not state explicitly what I am driving at with Dewey’s philosophy of education, concerning the current state of affairs in general public education in North America and the advances in A.I. (Artificial Intelligence). If the general education field does not address the question(s) concerning “A.I.” and the implications of unfettered ignorance surrounding A.I., “public education” as we know it will not address the same concerns in the next ten years. This pronouncement can be perceived as mere “fear-mongering” and “anti-progressive”; however, we need to come to terms with ‘some’ of the implications of A.I. in the field of education.

Now, before diving deep into the muck of A.I., education, and Dewey’s philosophy, one needs a general idea of what “A.I.” is and what it isn’t. It is good practice to parse out what A.I. is ‘doing’ rather than “what-we-think-it’s-doing”, so as to avoid some common misconceptions.

The idea of artificial intelligence is not remotely new, with an early example coming from René Descartes, often called the first modern philosopher. Descartes posits an automaton theory of the human being as a proto-form of what one ‘could’ consider an intelligent machine, at least from a mechanical point of view. In theory, one could construct a machine that does everything a human being could do, but purely through mechanical means. A present-day example is the robots currently being developed that can perform physical operations a human being could perform. Obviously, such behaviour does not by itself imply intelligence on an intellectual level.

The general approach of “if it looks, behaves, and performs tasks similarly to a human being, then it must be intelligent” is considered a behaviourist or functionalist stance on intelligence and consciousness. One could make the plea that the mere behaviour and appearance of intelligence means that the A.I., robot, etc., possesses a form of intelligence or human-like consciousness; however, this view harkens back to the behaviourist approach of 20th-century psychology.

The best-known and most used A.I. system on the market is ChatGPT, developed by OpenAI, an artificial intelligence research organization. The model behind ChatGPT and plenty of other A.I. tools on the market is what is called an “LLM”, or Large Language Model. What a large language model (LLM hereafter) is needs to be briefly explained. ChatGPT and other LLMs are trained on a “large” input of data: vast amounts of text from the internet, be it news articles, books, blog posts, etc. If a text was written by a human being and is freely available on the internet, there is a good chance it is part of the data sets used to train a given LLM. Heavy emphasis should be placed on these datasets, as ChatGPT derives its responses primarily from the scraped text it has been trained on rather than from the internet in real time. In a sense, the responses you receive originate from the “black box” of “learned” data.

The large dataset of (supposed) natural language that these LLMs use is processed through statistical methods for predicting text. An everyday example of such statistical/predictive models is the predictive text on mobile phones and web applications. The following text exchange with ChatGPT serves as a basic example of how this system currently operates.

“Provide an answer to the question "Who was the first president of the United States of America?". Additionally, provide "how" you provided the answer through ChatGPT.”

“The first president of the United States of America was George Washington.

How ChatGPT Provided the Answer:

Training Data: ChatGPT has been trained on a vast corpus of text that includes historical documents, books, websites, and other written sources up until its knowledge cutoff date in September 2021. This training data includes widely accepted historical facts such as the identities of U.S. presidents.

Pattern Recognition: During training, the model learned to recognize patterns and associations between words and phrases. When asked about the first president of the United States, the model uses these patterns to generate a response based on the information it has learned.

Contextual Prediction: Given the specific query "who was the first president of the United States of America?", the model uses the context to predict the most appropriate answer. It identifies "first president" and "United States of America" as key terms and generates the historically accurate response "George Washington."

Statistical Likelihood: The model calculates the statistical likelihood of different possible responses based on the training data. "George Washington" is the most probable and contextually accurate answer given the input query.”

(June 19, 2024)

The explicit lesson we can derive from this response is that LLMs are statistical prediction models of what could be considered the correct answer to the provided question. Now, much like human beings, these LLMs can (and often do) provide incorrect answers. The zeitgeist of LLMs providing an (intellectual) answer to any and every conceivable (intellectual) question does not mean they are providing “human reasoned” answers. The provided example and (brief) explanation suggest that these LLMs are “function over form”. The stance that something “appears” intelligent and is therefore intelligent is, again, a form of functionalism. The functionalism of LLMs works by feeding an enormous dataset through these statistical and predictive learning models, which then gamble on a highly probable “intelligent” answer for the LLM user. It should be mentioned that the question of what counts as a reasoned human response and as intelligence will not be explored in depth in this article, as the debate over “whether a provided A.I. response can be reasonably compared to an intelligent/reasoned human response” treads into the territory of philosophy of mind and epistemology.
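To make the idea of “statistical prediction” a little more concrete, here is a toy sketch in Python of the kind of predictive text mentioned earlier. It is emphatically not how ChatGPT is built: real LLMs use neural networks trained on vastly more text. The tiny corpus and the function names (`train_bigram_counts`, `next_word_probabilities`, `predict_next`) are my own assumptions for illustration. All the sketch does is count which word tends to follow which, and then pick the most frequent follower.

```python
from collections import Counter, defaultdict

# A tiny, made-up corpus (an assumption for illustration only;
# real LLMs are trained on vastly larger collections of text).
corpus = (
    "george washington was the first president . "
    "the first president was george washington . "
    "the first president of the united states was george washington . "
    "john adams was the second president ."
)


def train_bigram_counts(text):
    """Count how often each word is followed by each other word."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probabilities(counts, word):
    """Turn the raw follower counts for `word` into probabilities."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()}


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word, Counter())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


counts = train_bigram_counts(corpus)
print(predict_next(counts, "george"))        # most likely word after "george"
print(next_word_probabilities(counts, "the"))  # probabilities of words after "the"
```

The toy model “answers” with whatever followed most often in its data, with no notion of whether the answer is true. That is the sense in which the response is a gamble on the statistically likely continuation rather than a reasoned one.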

Dewey’s Pragmatism and A.I. in Education

Now that the bulk of the background/foundation has been established, namely “who” John Dewey was and what the current and popular landscape of Artificial Intelligence usage looks like in 2024, the looming question is: “how” does Dewey’s philosophy of education play a part in resisting the temptations of full-scale use of A.I. in education? I must admit that the usage of LLMs in one’s practice can be useful; however, much like in economics, there is no such thing as a free lunch in one’s educational practice. Now, so as not to paint a completely bleak picture of A.I. and how “evil” it might be, there are advantages and time-saving ways in which LLMs can help teachers generate assessment pieces.

The pros: LLMs are very useful for generating quick multiple-choice questions, project ideas, and summaries of provided material. The majority of teachers in the K-12 public school system are not dealing with things such as string theory, the subtle nuances of quantum mechanics, and the hard problem of consciousness, among other perennial philosophical and “hard” scientific questions. LLMs can and often do provide valid, usable, and reasonable teaching materials and formative assessment pieces; however, it is still up to the teaching professional to check and verify that the provided questions and, more importantly, the answers are valid and accurate.

The positives of using LLMs tend to be weighted more towards the teacher in terms of assessment creation; however, there are plenty of positives for students as well. Brainstorming essay ideas, summarizing articles and concepts, creating study guides, finding credible sources, etc., are among the potential positive resources that an LLM can provide, however…

The cons: The obvious negative is plagiarism and the LLM “doing the work”. The current debate about LLMs and education rests on the obvious misuse of LLMs in writing essays, written presentations, and any other take-home assignment or project a student might be given. Various states, provinces, and school systems have been pondering “how does one go about this new technology?”. I am in no real position to provide a concrete solution to this question; however, one stop-gap that could potentially stave off the cybernetic student is taking Dewey’s pragmatic education stance more seriously.

Know-how and Know-that: Changing our perspective on learning

As mentioned previously, I have no real or concrete solution to the ever-changing landscape of A.I. and digital technology, among other societal and economic conditions, in the classroom and in learning. However, a change in mindset about how one perceives and in turn conducts oneself in the classroom can mitigate some of the negative effects of A.I.

Embodied in the classroom: being-in-the-classroom. I am jesting with the neologism “being-in-the-classroom”; however, this mere “jest” does hold more weight than one might think. These LLMs and other A.I. models are predicated on a highly organized syntactical and statistical method of conveying discrete “knowledge”. To help mitigate this over-reliance on propositional knowledge, it is worth looking at Dewey’s emphasis on “know-how” rather than “know-that”.

“Know-that” is purely about propositional knowledge, which is to say that “I know that 2+2=4” and can then proceed to provide a proof of why such-n-such mathematical proposition is true and justified. “Know-how” is a different form of knowledge, as it emphasizes skills and processes of dealing with the world, as shown in the writings of the phenomenologists (Heidegger, Sartre, Merleau-Ponty, among others), Gilbert Ryle, and in John Dewey’s educational philosophy. The point of education and learning, in general, is not “look at how much propositional knowledge is stored in my brain”; rather, the emphasis should be on how this propositional knowledge has any relation to the “world”. I have heard multiple students, adults, and the man-on-the-street utter such pronouncements as “How does this relate to my life?”, and for the most part the man-on-the-street does not need pure mathematics or knowledge of evolution to order a coffee, fix a car, or even file a yearly tax return.

The Deweyite educational stance should be upheld more in the classroom, as classrooms are being flooded with digital technologies that, while supposedly there to help with students’ education, more or less cut short an authentic embodiment of knowledge that can be used in the real world. It would not be unreasonable to state that the thread of history and the student are being given a raw deal, with these LLMs offering a quick out by providing ready-made essays and answers to assessments. One cannot take a Heideggerian hammer to these A.I. systems, as Pandora’s box has been opened and the world of LLMs has sprung out. I can reasonably say that this topic should be explored in more depth, in terms of how one might shift one’s mindset towards a more enactive and/or embodied approach to classroom instruction and to public education in general, as the allure of the cybernetic child might be placing students at a disadvantage.

I am again fully aware that this post did not provide any sort of solution; however, I would like to think that it has provided some food for thought, as the landscape of this post-COVID-19 world has presented some existential questions in a variety of professional vocations about such-n-such activity and about “if” we as a human race should fully embrace these new technologies. The questions raised in this post are not new by any stretch; however, these questions of “expert systems” replacing such-n-such jobs or brain power are highly relevant now, and we should think deeply about whether to continue accepting these developing technologies as uncritically as we have thus far.

I will leave it at that for the time being and will write more on this topic, regardless of whether 100 or 5 people decide to read it and, more importantly, deal with this emerging problem.